Purpose

This report enables you to extract the details of a Test Execution, such as the Tests that are part of it, Defects, Requirements, and iteration details, so that you can generate a document report focusing on what matters most to your team, or share it with someone who does not have access to Jira.

...

- see all the requirements covered by the Tests in the Test Execution

- see all the defects linked to the Tests in this Test Execution

- see an overall status summary of the Test Execution

- check specific details of a Test Run (such as evidence, attachments, assignee, etc.)

Output Example(s)

The following screenshots show an example of the sections you should expect in this report.

How to use

This report can be generated from the Issue details screen.

...

| Info |
|---|
| General information about all the places you can export from, and how to do so, is available in the Exporting page. |

Source data

This report is applicable to:

- 1 Test Execution issue

Output format

The standard output format is .DOCX, so you can open it in Microsoft Word, Google Docs, and other tools compatible with this format.

Report assumptions

The template relies on a set of assumptions. Make sure that your Jira/Xray environment complies with them:

...

If any of these assumptions is not met, you need to update the template accordingly.

Usage examples

Export all details obtained in the context of a given Test Execution

- open the Test Execution issue and export it using this template

Understanding the report

The report shows detailed information about the Test Execution provided.

Layout

The report is composed of several sections. Two major sections are available: Introduction and Test Runs details.

By default, and to avoid overload/redundancy of information, only the "Introduction" section will be rendered; you can change this behavior on the template (more info ahead).

"Introduction" section

This section is divided into 6 sub-sections that give an overview of the Test Execution we have just exported:

...

Each of these sections is explained below.

Document Overview

Brief description of what you will find in this report and how it was generated.

Test Execution Details

In this section we extract the Test Execution key into the header and show the Begin and End Date (formatted as demonstrated below), together with the Summary and the Description of the Test Execution.

...

The output will have the following information. Note that, as the Description field supports wiki markup, we use the "wiki:" keyword so that it is interpreted correctly.
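
As an illustration of the "wiki:" keyword, the two placeholders below contrast the raw and the wiki-rendered Description. This is a minimal sketch: it assumes the field is exposed under the name Description, which may differ from the placeholder actually used in the template.

```text
Raw text (wiki markup printed as typed): ${Description}
Rendered wiki markup: ${wiki:Description}
```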

Requirements covered by the Tests in this Test Execution

In this section we have an overview of all the requirements that are covered by Tests in this Test Execution. We extract the Key, Summary, Workflow Status and Test Status, removing all repeated entries.

...

The requirements are listed in a table with the information explained above.

Overall Execution Status

As the name suggests, this section gives an overview of the executions of the Tests in this Test Execution: how many Test Runs it contains and what their statuses are.

...

This will produce the following output:

Defects

In this section we list all the defects associated with this Test Execution. We consider defects associated with Test Runs, defects in Test Steps, and defects found during the iterations. We do not print duplicates.

...

The Defects appear in the document as a table with information regarding the defects found during the executions of the Test Execution.

Test Runs

In this section we have a table with information regarding the Test Runs in this Test Execution. You can find the following information about each Test Run:

...

This information is presented in a table as we can see below:

One particularity of the code used to render the Test Runs section is worth highlighting:

- Usage of ${fullname:Tests[n].AssigneeId}: this allows us to fetch the full name of the assignee instead of the key associated with it.
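
To see the difference, both forms can be placed side by side in the template. This sketch only reuses the placeholder named above; the label text is illustrative, and both lines are assumed to sit inside the loop that defines n.

```text
Assignee (stored identifier): ${Tests[n].AssigneeId}
Assignee (full name): ${fullname:Tests[n].AssigneeId}
```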

Test Run Details

This section will gather all the information related to each Test Run of each Test in the Test Execution with all the possible details.

It is composed of several sub-sections, each of which is filled with information if it is available, or with a message stating that no information is available.

Test Executions Summary

This section has a table with information about each Test Run in this Test Execution (these sections are repeated for each Test Run). The table has the following fields:

...

All of these fields have code to handle empty values. The resulting table looks like the one below.

Execution Defects

If any Defects were found and associated globally with a Test Run, they will appear here in the form of a table with the following fields:

...

The table will be similar to the one below.

Execution Evidences

If any Evidence was attached to the Test Run, we show it in a table with the FileName.

...

When an Evidence is an image, the table will look like this:

Comment

The comment associated with the Test Run (${wiki:TestRuns[n].Comment}).

Test Description

The description of the Test Run (${wiki:TestRuns[n].Description}).

Test Issue Attachments

This section only appears if there are attachments associated with the Test Run.

...

This appears in the document in a table form:

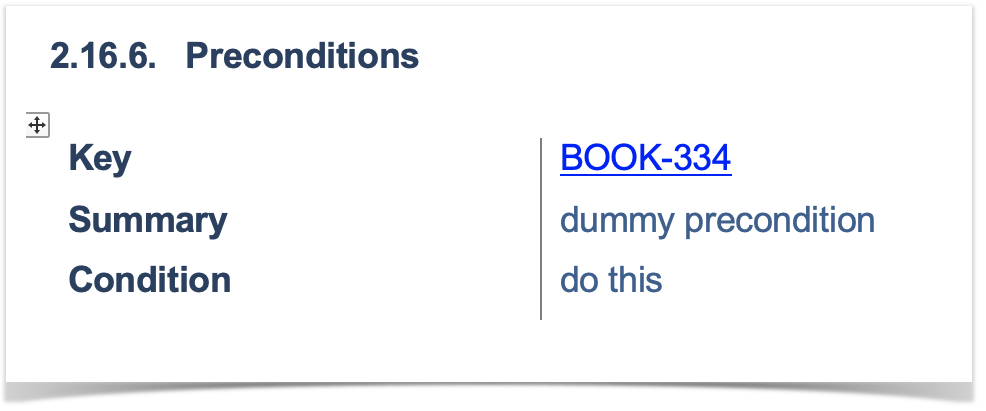

Preconditions

This section only appears if there is a Precondition associated with the Test Run.

...

A sub-section will appear with the precondition definitions.

Parameters

This section lists the existing parameters of the TestRun (we are iterating through the Parameters of the TestRun with: #{for m=TestRuns[n].ParametersCount}).

...

It will list the Key and the Value of each parameter in a table.
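
A minimal sketch of such a loop is shown below. The loop header is the one quoted above; the Parameters[m].Key and Parameters[m].Value placeholders and the closing #{end} are assumptions based on the usual Document Generator conventions, so check them against your template before reusing them.

```text
#{for m=TestRuns[n].ParametersCount}
${TestRuns[n].Parameters[m].Key}: ${TestRuns[n].Parameters[m].Value}
#{end}
```

Since the report renders these values in a table, the placeholders would normally sit in table cells rather than on a single line.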

Iterations

This section uses a sentence to show how many iterations exist; they are covered in more detail in the next sections.

...

A sentence is added to the document with this information.

Iteration Overall Execution Status

To obtain the overall execution status of the iteration we use two variables:

...

The above code will produce the table below.

Test Run details

In this section we show the Test Run details with the Name, Status and Parameters.

...

This section will look like the example below:

Iteration precondition definition

If a precondition is present we will use the following fields to extract that information:

...

This will produce an entry like the one below:

Iteration parameters details

For a given Iteration we list the parameters used. That information is extracted with the following fields:

...

It generates a table of the following form:

Iteration Test Step Details

In this section we list the details of an iteration, including each step with its details. The code we use for that purpose is shown in the table below.

...

The above information is gathered in a table like the one below:

Test Details

This section shows the Test details. For that, we consider the different types of Test available in Xray: Generic, Manual and Cucumber. For each type we fetch different information.

...

| Type | Key | Description | Sample Code | Output |
|---|---|---|---|---|
| Generic | Test Type | Test Type field | ${TestRuns[n].Test Type} | |
| | Specification | Definition of the Generic test | ${TestRuns[n].Generic Test Definition} | |
| Cucumber | Test Type | Test Type field | ${TestRuns[n].Test Type} | |
| | Gherkin Specification | Gherkin specification of the Test | ${TestRuns[n].Cucumber Scenario} | |
| Manual | Step | Step Number | ${TestRuns[a].TestSteps[r].StepNumber} | |
| | Action | Action of the Test Step | ${TestRuns[a].TestSteps[r].Action} | |
| | Data | Data of the Test Step | ${TestRuns[a].TestSteps[r].Data} | |
| | Expected Result | Expected Result of the Test Step | ${TestRuns[a].TestSteps[r].ExpectedResult} | |
| | Attachments | Attachment of the Test Step | ${TestRuns[a].TestSteps[r].Attachments[sa].FileURL} !{${TestRuns[n].TestSteps[r].Attachments[sa].Attachment}\|width=100} | |
| | Comment | Comment of the Test Step | ${wiki:TestRuns[a].TestSteps[r].Comment} | |
| | Defects | Defects associated with the Test Step | ${TestRuns[a].TestSteps[r].Defects[dc].Key} | |
| | Evidence | Evidence of the Test Step | ${TestRuns[a].TestSteps[r].Evidences[e].FileURL} !${TestRuns[a].TestSteps[r].Evidences[e].Evidence\|maxwidth=100} | |
| | Status | Status of the Test Step | ${TestRuns[a].TestSteps[r].Status} | |

Requirements linked with this test

For each Test we list the linked Requirements.

...

This section presents a table with that information, like the one below:

Appendix A: Approval

This section is added for the cases where you need to have a signature validating the document.

Customizing the report

Sections that can be hidden or shown

The report has some variables/flags that can be used to show or hide some sections whose logic is already implemented in the template.

...

| Variable/flag | Purpose | Default | Example(s) |
|---|---|---|---|
| showTestRunDetails | render the Test Run details section | 0 | ${set(showTestRunDetails, 0)} |
| showTestRunEvidences | render the Test Run evidences section (make sure showTestRunDetails is set to 1) | 0 | ${set(showTestRunEvidences, 0)} |
| showTestRunAttachments | render the Test Run attachments section (make sure showTestRunDetails is set to 1) | 0 | ${set(showTestRunAttachments, 0)} |
| showTestRunIterations | render the Test Run iterations section (make sure showTestRunDetails is set to 1) | 0 | ${set(showTestRunIterations, 0)} |
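
For example, to render the Test Run details together with the evidences, both flags would be set to 1. This sketch only reuses the set syntax from the table above; where these statements are located in the template is not shown here.

```text
${set(showTestRunDetails, 1)}
${set(showTestRunEvidences, 1)}
```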

Adding or removing information to/from the report

As this report is a document with different sections, if some sections are not relevant to you, you should be able to simply delete them. Make sure that the deleted section does not create temporary variables that are used in subsequent sections, and that any conditional blocks remain properly closed.

...

The latter may be harder to implement, so we won't consider them here.

Exercise: add a field from the related Test issue

Let's say we have a "Severity" field on the Defect that is connected to the Test Execution, and that we want to show it on the report.

...

- insert new column in the table

- on the "Test Details" section,

- copy "Comment" (i.e., insert a column next to it and copy the values from the existing "Comment" column)

- change ${wiki:TestRuns[n].TestSteps[r].Comment} to ${TestRuns[n].TestSteps[r].Severity}

Performance

Performance can be impacted by the information that is rendered and by how that information is collected/processed.

...

Known limitations

- Test Execution comments are not formatted

- Gherkin Scenario Outlines are not considered as data-driven (i.e., only one Test Run will appear)

...