
...

With AltWalker, the automation code related to our model can be implemented in Python, C#, or other languages. In this approach, GraphWalker is only responsible for generating the path through the models.

Let's start by clarifying some key concepts, using the clear explanations provided by GraphWalker's documentation:

...

In MBT, especially in the case of State Transition Model-Based Testing, we start from a given vertex, but the path, which describes the sequence of edges and vertices visited, can be quite different each time the tool generates it. The stop condition is not composed of one or more well-known and fixed expectations; it's based on graph/model-related criteria (e.g., reaching 100% vertex coverage).

When we "execute the model", the tool walks the path (i.e., goes from vertex to vertex through a given edge) and performs checks in the vertices. If those checks succeed until the stop condition(s) is achieved, we can say that the run was successful; otherwise, the model is not a good representation of the system as it is, and we can say that it "failed".
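To make the idea of walking a model until a stop condition is met more concrete, here is a minimal sketch in plain Python. It is not how GraphWalker is implemented; it only imitates a random path generator with a "visit every vertex" stop condition. The toy model, the vertex and edge names, and the `random_walk` function are all hypothetical and exist only for illustration.

```python
import random

# Hypothetical toy model: each edge is (edge_name, source_vertex, target_vertex).
EDGES = [
    ("e_FindOwners", "v_HomePage", "v_FindOwners"),
    ("e_Search", "v_FindOwners", "v_Owners"),
    ("e_HomePage", "v_FindOwners", "v_HomePage"),
    ("e_HomePage", "v_Owners", "v_HomePage"),
]


def random_walk(edges, start, seed=None, max_steps=1000):
    """Walk randomly from `start` until every vertex has been visited.

    Returns the path as an alternating list: vertex, edge, vertex, ...
    """
    rng = random.Random(seed)
    all_vertices = {start} | {v for _, source, target in edges for v in (source, target)}
    visited = {start}
    path = [start]
    current = start
    steps = 0
    # Stop condition: 100% vertex coverage (every vertex visited at least once).
    while visited != all_vertices:
        if steps >= max_steps:
            raise RuntimeError("stop condition not reached within max_steps")
        outgoing = [(name, target) for name, source, target in edges if source == current]
        if not outgoing:
            raise RuntimeError(f"dead end at {current}")
        edge_name, current = rng.choice(outgoing)
        path += [edge_name, current]
        visited.add(current)
        steps += 1
    return path


if __name__ == "__main__":
    # Different seeds usually yield different paths through the same model.
    print(" -> ".join(random_walk(EDGES, "v_HomePage", seed=1)))
```

In a real run, each vertex name in the path would trigger the checks implemented for that vertex, and each edge name would trigger the corresponding action.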

Example

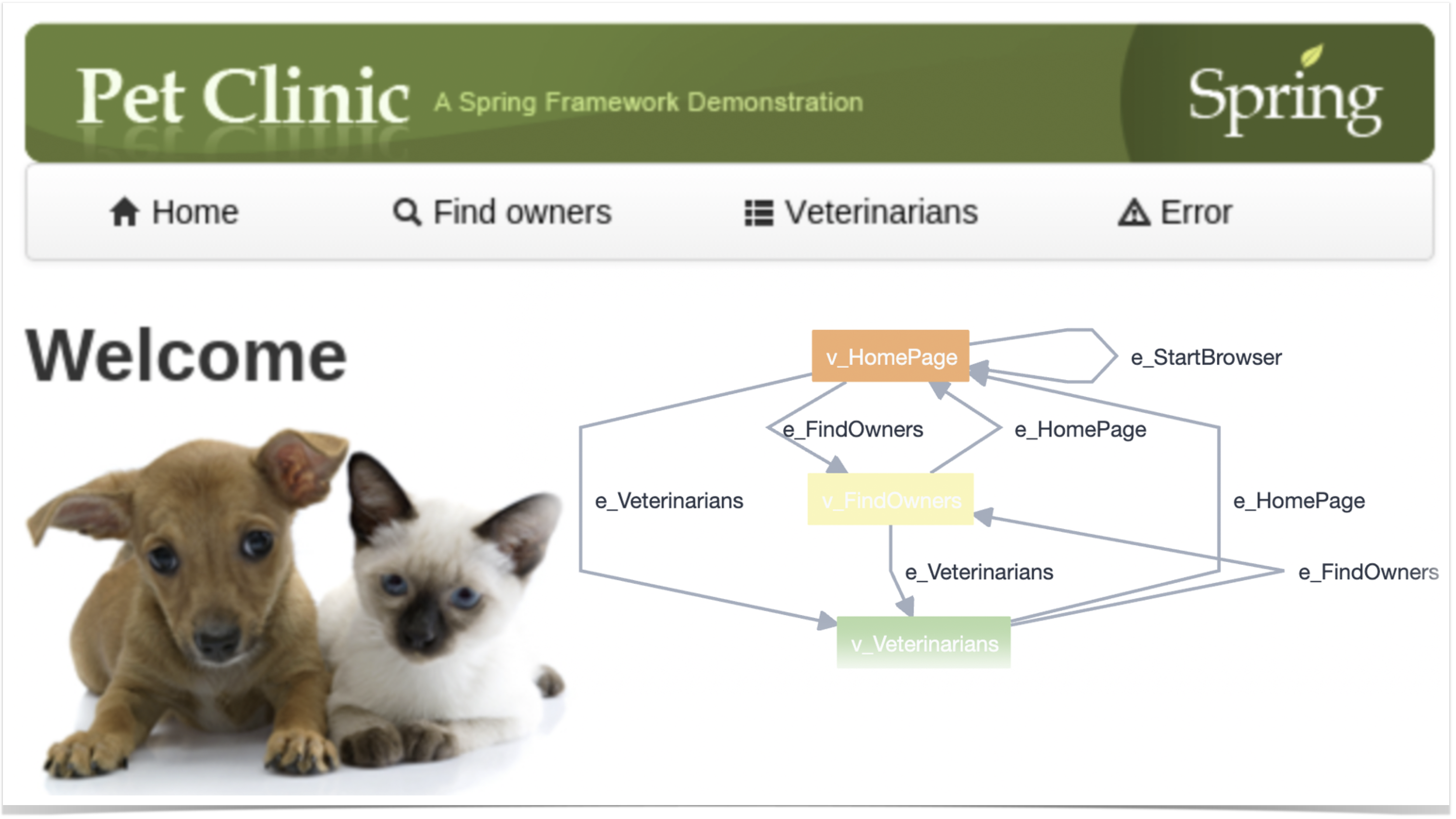

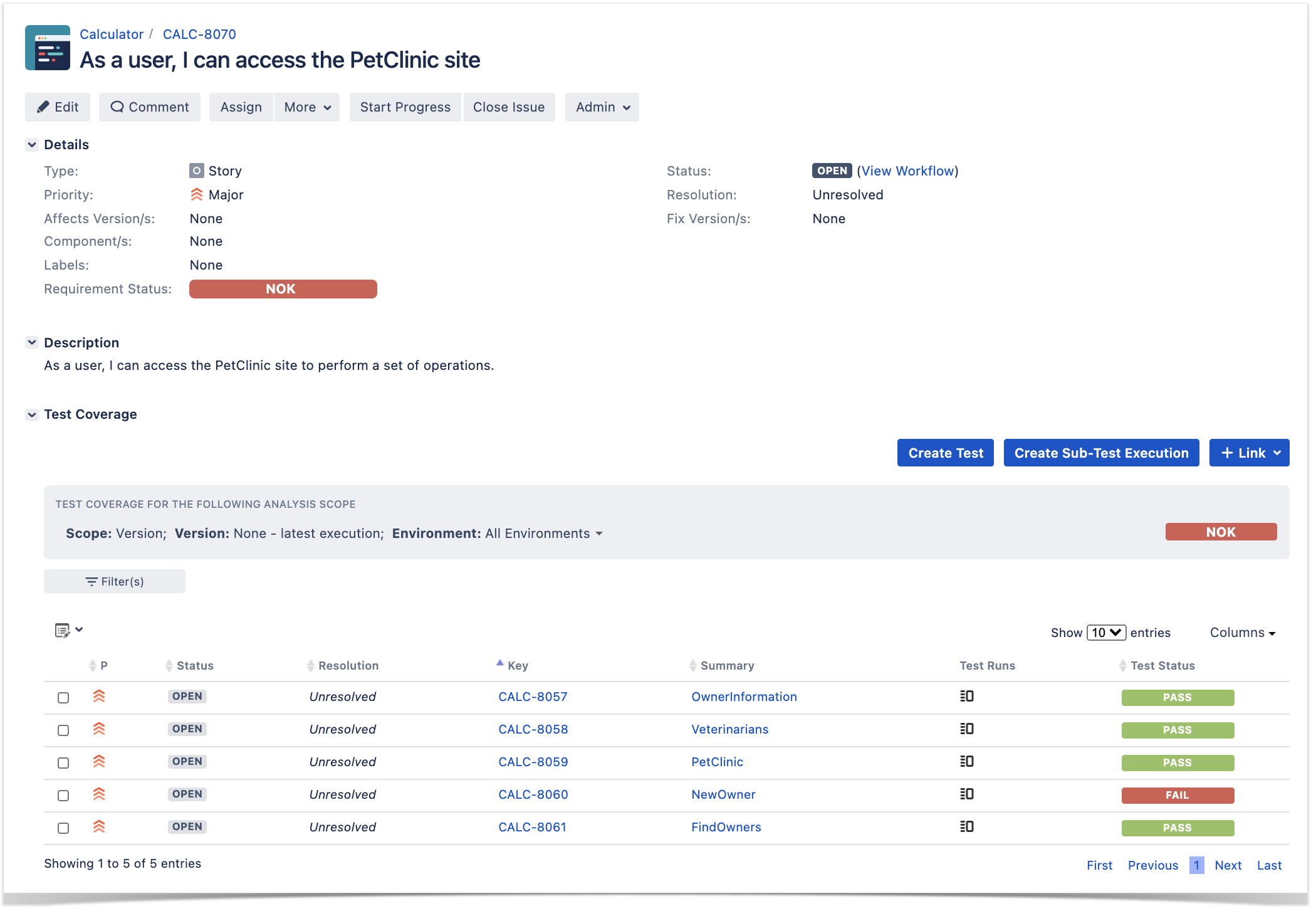

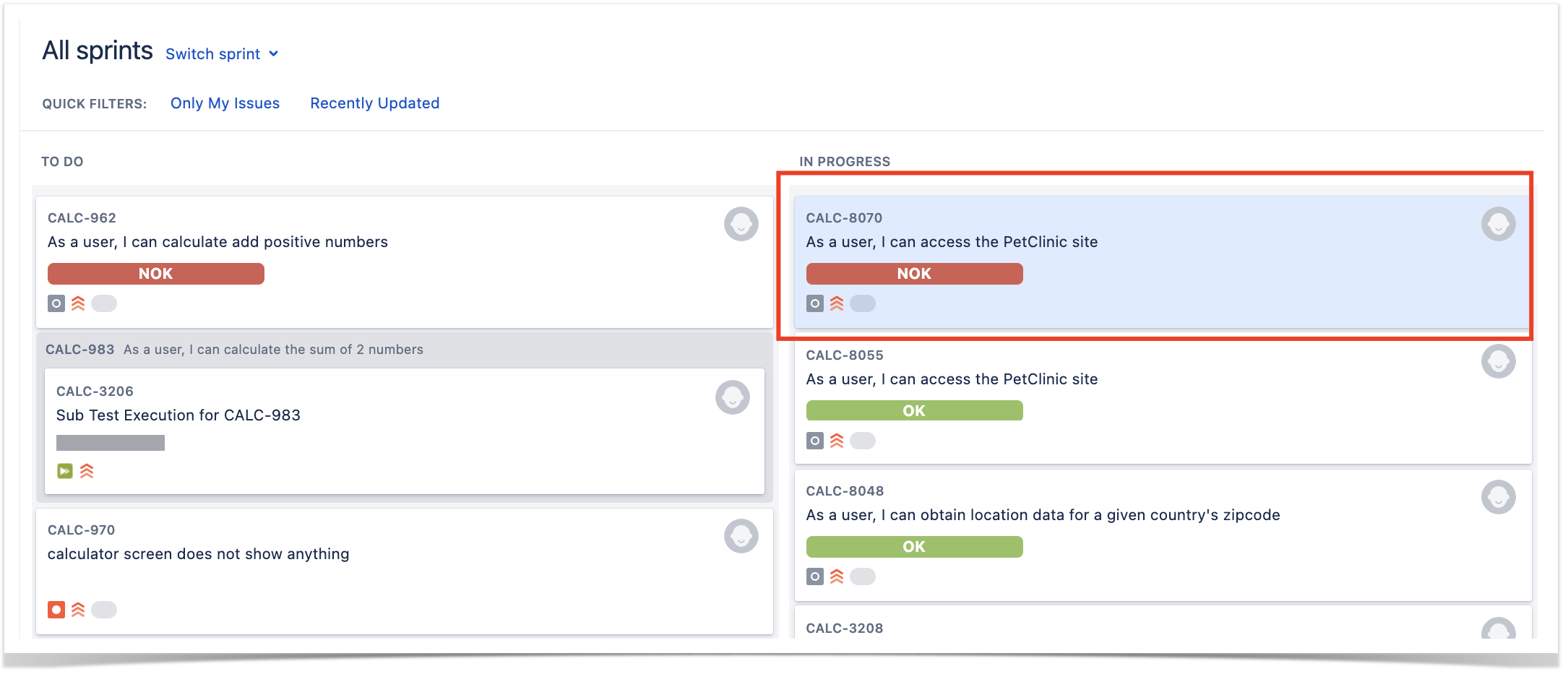

This tutorial is based on an example provided by the GraphWalker community (please check the GraphWalker wiki page describing it), which targets the well-known PetClinic sample site.

...

As mentioned earlier, models can be built using AltWalker's Model-Editor (or GraphWalker Studio) or directly in the IDE (for VSCode there's a useful extension to preview them). In the visual editors, namely in AltWalker's Model-Editor, we can load previously saved model(s) like the ones in petclinic_full.json. In this case, the JSON file contains several models; we could also have one JSON file per model.
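As a rough illustration of a JSON file holding several models, the sketch below parses a small document and lists the models it contains. The field names (`models`, `generator`, `startElementId`, `vertices`, `edges`, `sourceVertexId`, `targetVertexId`) reflect the GraphWalker JSON format as I understand it and should be checked against the official documentation. The model content and the `summarize_models` helper are hypothetical and are not taken from petclinic_full.json.

```python
import json

# Hypothetical file content with two small models (not the real PetClinic models).
SAMPLE = """
{
  "name": "Hypothetical two-model file",
  "models": [
    {
      "name": "NavigationModel",
      "generator": "random(vertex_coverage(100))",
      "startElementId": "v0",
      "vertices": [
        {"id": "v0", "name": "v_HomePage"},
        {"id": "v1", "name": "v_FindOwners"}
      ],
      "edges": [
        {"id": "e0", "name": "e_FindOwners",
         "sourceVertexId": "v0", "targetVertexId": "v1"}
      ]
    },
    {
      "name": "OwnersModel",
      "generator": "random(vertex_coverage(100))",
      "vertices": [{"id": "v2", "name": "v_Owners"}],
      "edges": []
    }
  ]
}
"""


def summarize_models(text):
    """Return (model name, vertex count, edge count) for each model in the file."""
    document = json.loads(text)
    return [
        (model["name"], len(model.get("vertices", [])), len(model.get("edges", [])))
        for model in document.get("models", [])
    ]


if __name__ == "__main__":
    for name, vertices, edges in summarize_models(SAMPLE):
        print(f"{name}: {vertices} vertices, {edges} edges")
```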

...

| Info | ||

|---|---|---|

| ||

As detailed in AltWalker's documentation, if we start from scratch (i.e. without a model), we can initialize a project for our automation code using something like:

$ altwalker init -l python test-project

When we have the model, we can generate the test package containing a skeleton for the underlying test code:

$ altwalker generate -l python path/for/test-project/ -m path/to/models.json

If we already have a model, we can pass it to the initialization command:

$ altwalker init -l python test-project -m path/to/model-name.json

During implementation, we can check our model for issues/inconsistencies, just from a modeling perspective:

$ altwalker check -m path/to/model-name.json "random(vertex_coverage(100))"

We can also verify whether the test package contains the implementation of the code related to the vertices and edges:

$ altwalker verify -m path/to/model-name.json tests

Check the full syntax of AltWalker's CLI (i.e. "altwalker") for additional details.

|

...

. |

The main test package is stored in tests/test.py. The implementation follows the Page Object Model, using the pypom package, and each page is stored in its own class under a dedicated pages directory.
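For orientation, the sketch below shows the general shape such a test package can take: one class per model, with one method per vertex (checks) and per edge (actions), named after the elements in the model. To keep it runnable without a browser, it uses a hypothetical `FakeBrowser` stand-in instead of Selenium and pypom page classes. The class, method, and page names are assumptions for illustration and are not the code of this tutorial.

```python
class FakeBrowser:
    """Hypothetical stand-in for a real browser driven through page objects."""

    def __init__(self):
        self.current_page = "home"

    def open(self, page):
        self.current_page = page


# In a real project this would be a WebDriver instance created in a setup hook.
browser = FakeBrowser()


class NavigationModel:
    """One class per model; method names match the vertex and edge names."""

    def v_HomePage(self):
        # Vertex: verify that the system is in the expected state.
        assert browser.current_page == "home"

    def e_FindOwners(self):
        # Edge: perform the action that moves the system to the next state.
        browser.open("find_owners")

    def v_FindOwners(self):
        assert browser.current_page == "find_owners"

    def e_HomePage(self):
        browser.open("home")
```

Keeping the checks in the vertices and the actions in the edges mirrors the way the model is walked: an edge changes the state, and the following vertex confirms that the new state is the expected one.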

...

| Code Block | ||||

|---|---|---|---|---|

| ||||

from altwalker.planner import create_planner
from altwalker.executor import create_executor
from altwalker.walker import create_walker
from custom_junit_reporter import CustomJunitReporter

import sys
import pdb
import click


def _percentege_color(percentage):
    if percentage < 50:
        return "red"

    if percentage < 80:
        return "yellow"

    return "green"


def _style_percentage(percentege):
    return click.style("{}%".format(percentege), fg=_percentege_color(percentege))


def _style_fail(number):
    color = "red" if number > 0 else "green"
    return click.style(str(number), fg=color)


def _echo_stat(title, value, indent=2):
    title = " " * indent + title.ljust(30, ".")
    value = str(value).rjust(15, ".")

    click.echo(title + value)


def _echo_statistics(statistics):
    """Pretty-print statistics."""

    click.echo("Statistics:")
    click.echo()

    total_models = statistics["totalNumberOfModels"]
    completed_models = statistics["totalCompletedNumberOfModels"]
    model_coverage = _style_percentage(completed_models * 100 // total_models)

    _echo_stat("Model Coverage", model_coverage)
    _echo_stat("Number of Models", click.style(str(total_models), fg="white"))
    _echo_stat("Completed Models", click.style(str(completed_models), fg="white"))
    _echo_stat("Failed Models", _style_fail(statistics["totalFailedNumberOfModels"]))
    _echo_stat("Incomplete Models", _style_fail(statistics["totalIncompleteNumberOfModels"]))
    _echo_stat("Not Executed Models", _style_fail(statistics["totalNotExecutedNumberOfModels"]))

    click.echo()


# Optional debugger that skips AltWalker's internal frames (see set_trace below).
debugger = pdb.Pdb(skip=['altwalker.*'], stdout=sys.stdout)
reporter = None

if __name__ == "__main__":
    try:
        planner = None
        executor = None
        statistics = {}

        # Each entry pairs a model file with its generator and stop condition.
        models = [("models/petclinic_full.json", "random(vertex_coverage(100))")]
        steps = None
        graphwalker_port = 5000
        start_element = None
        url = "http://localhost:5000/"
        verbose = False
        unvisited = False
        blocked = False

        tests = "tests"
        executor_type = "python"

        planner = create_planner(models=models, steps=steps, port=graphwalker_port, start_element=start_element,
                                 verbose=True, unvisited=unvisited, blocked=blocked)
        executor = create_executor(tests, executor_type, url=url)
        reporter = CustomJunitReporter()
        walker = create_walker(planner, executor, reporter=reporter)
        walker.run()
        statistics = planner.get_statistics()
    finally:
        print(statistics)

        # Only report when the run got far enough to produce statistics;
        # otherwise the original exception would be masked by a new one here.
        if reporter is not None and statistics:
            _echo_statistics(statistics)
            reporter.set_statistics(statistics)

            # One testcase per model.
            junit_report = reporter.to_xml_string()
            print(junit_report)
            with open('output.xml', 'w') as f:
                f.write(junit_report)

            # The whole run as a single testcase.
            with open('output_allinone.xml', 'w') as f:
                f.write(reporter.to_xml_string(generate_single_testcase=True, single_testcase_name="PetClinicAllinOne"))

        #debugger.set_trace()

        if planner:
            planner.kill()

        if executor:
            executor.kill()

|

This code makes use of a custom reporter that can generate JUnit XML reports in two different ways:

...
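The difference between a report with one testcase per model and a report with a single testcase for the whole run can be sketched with the standard library alone. This is not the implementation of the custom reporter used in this tutorial; the `to_junit_xml` function, its input format (a list of model name and failure message pairs), and the sample data are hypothetical.

```python
import xml.etree.ElementTree as ET


def to_junit_xml(results, single_testcase_name=None):
    """Build a minimal JUnit-style XML string.

    `results` is a list of (model_name, failure_message_or_None) tuples.
    With `single_testcase_name`, the whole run collapses into one testcase.
    """
    suite = ET.Element("testsuite", name="AltWalker")
    if single_testcase_name:
        # Single testcase: it fails if any model failed.
        messages = [f"{model}: {msg}" for model, msg in results if msg]
        case = ET.SubElement(suite, "testcase", name=single_testcase_name)
        if messages:
            ET.SubElement(case, "failure", message="; ".join(messages))
        tests, failures = 1, int(bool(messages))
    else:
        # One testcase per model.
        failures = 0
        for model, msg in results:
            case = ET.SubElement(suite, "testcase", name=model)
            if msg:
                ET.SubElement(case, "failure", message=msg)
                failures += 1
        tests = len(results)
    suite.set("tests", str(tests))
    suite.set("failures", str(failures))
    return ET.tostring(suite, encoding="unicode")


if __name__ == "__main__":
    sample = [("NavigationModel", None), ("OwnersModel", "v_Owners: element not found")]
    print(to_junit_xml(sample))
    print(to_junit_xml(sample, single_testcase_name="PetClinicAllinOne"))
```

Which shape to pick depends on how results should appear after import: one Test per model gives finer-grained status, while a single Test summarizes the whole run.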

| Code Block | ||||

|---|---|---|---|---|

| ||||

#!/bin/bash

# if you wish to map the whole run to a single Test in Xray/Jira
#REPORT_FILE=output_allinone.xml

# if you wish to map each model as a separate Test in Xray/Jira
REPORT_FILE=output.xml

curl -H "Content-Type: multipart/form-data" -u admin:admin -F "file=@$REPORT_FILE" "http://jiraserver.example.com/rest/raven/1.0/import/execution/junit?projectKey=CALC"

|

...

- Use MBT not to replace existing test scripts but in cases where you need to provide greater coverage

- Discuss the model(s) with the team and identify the ones that can be most valuable for your use case

- Multiple runs of your tests can be grouped and consolidated in a Test Plan, so you can have an updated overview of their current state

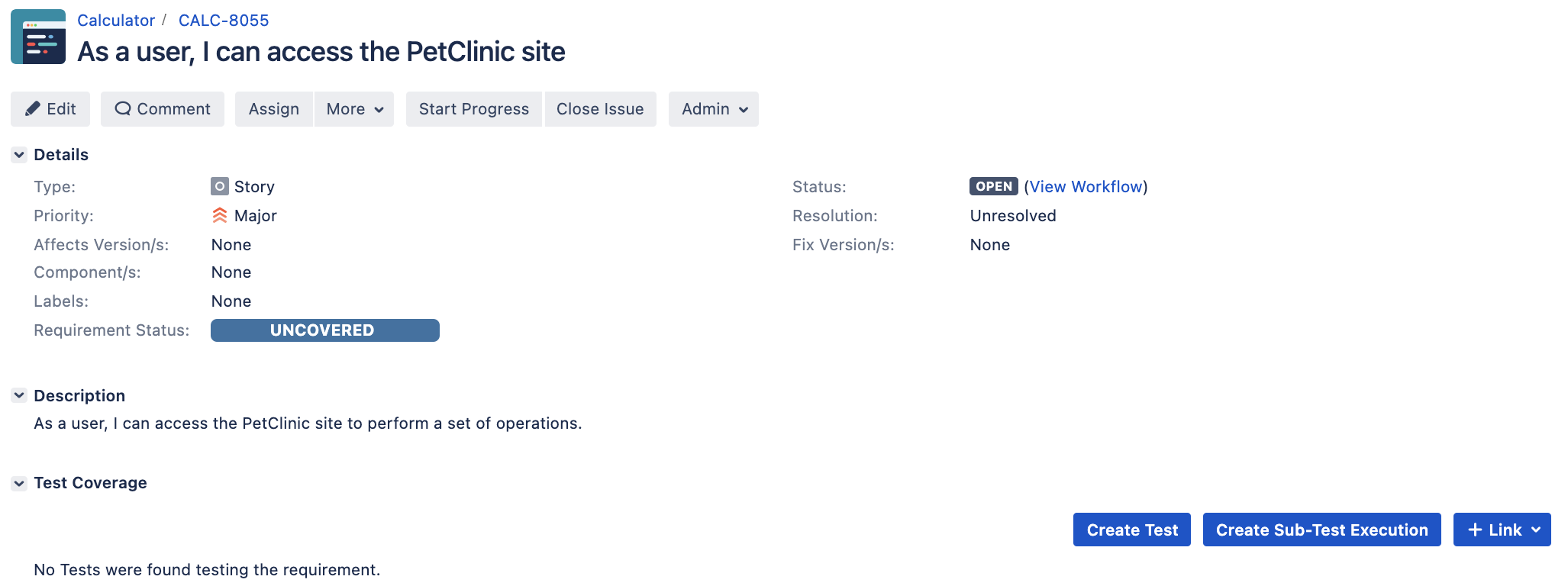

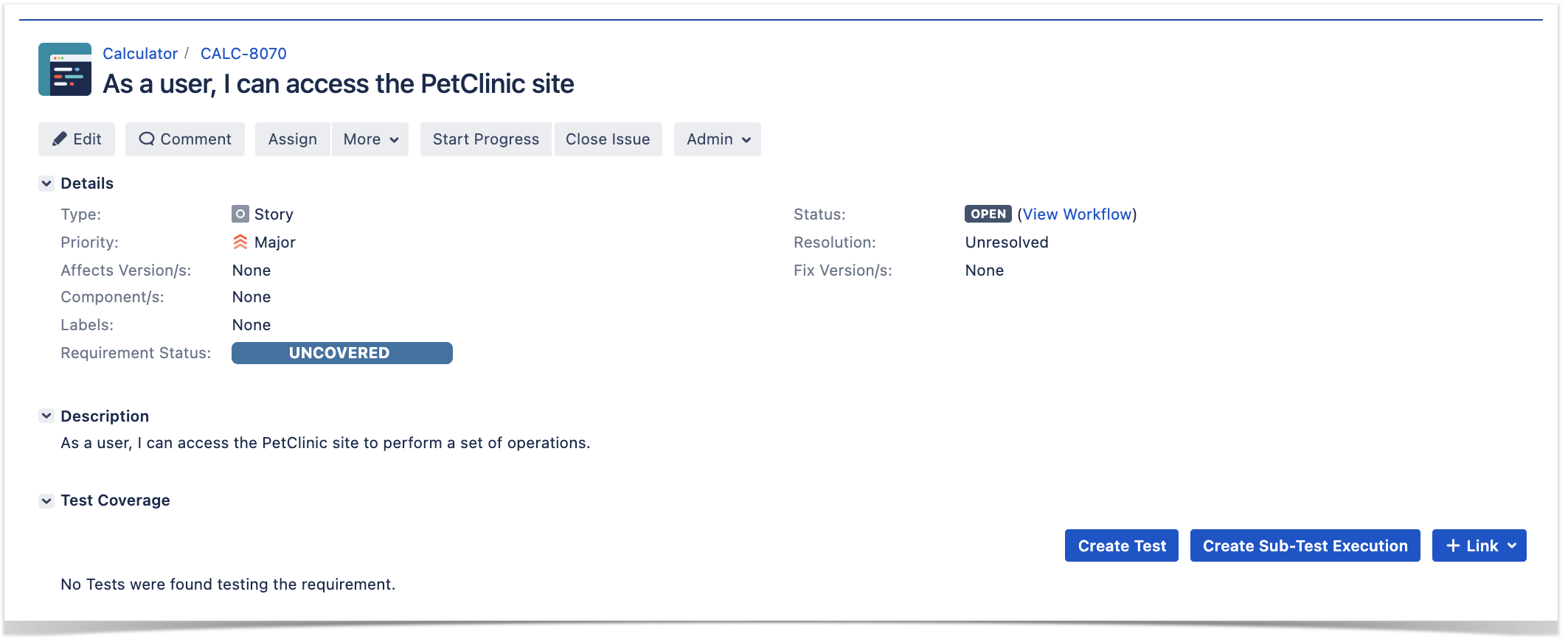

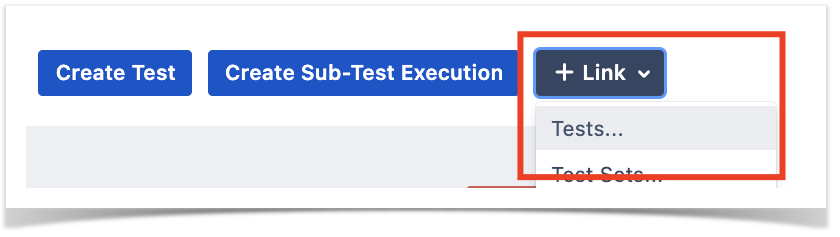

- After importing the results, you can link the corresponding Test issues with an existing requirement or user story and thus track coverage directly on the respective issue, or even on an Agile board

References

- AltWalker

- Visual model editor for AltWalker and GraphWalker

- AltWalker Model Visualizer for VSCode

- Actions and Guards (from AltWalker's documentation)

- AltWalker examples (Python and C#/.NET)

- AltWalker CLI

- Port of PetClinic MBT example to AltWalker and Python (code for this tutorial)

- GraphWalker models for testing the PetClinic site (source-code)

...