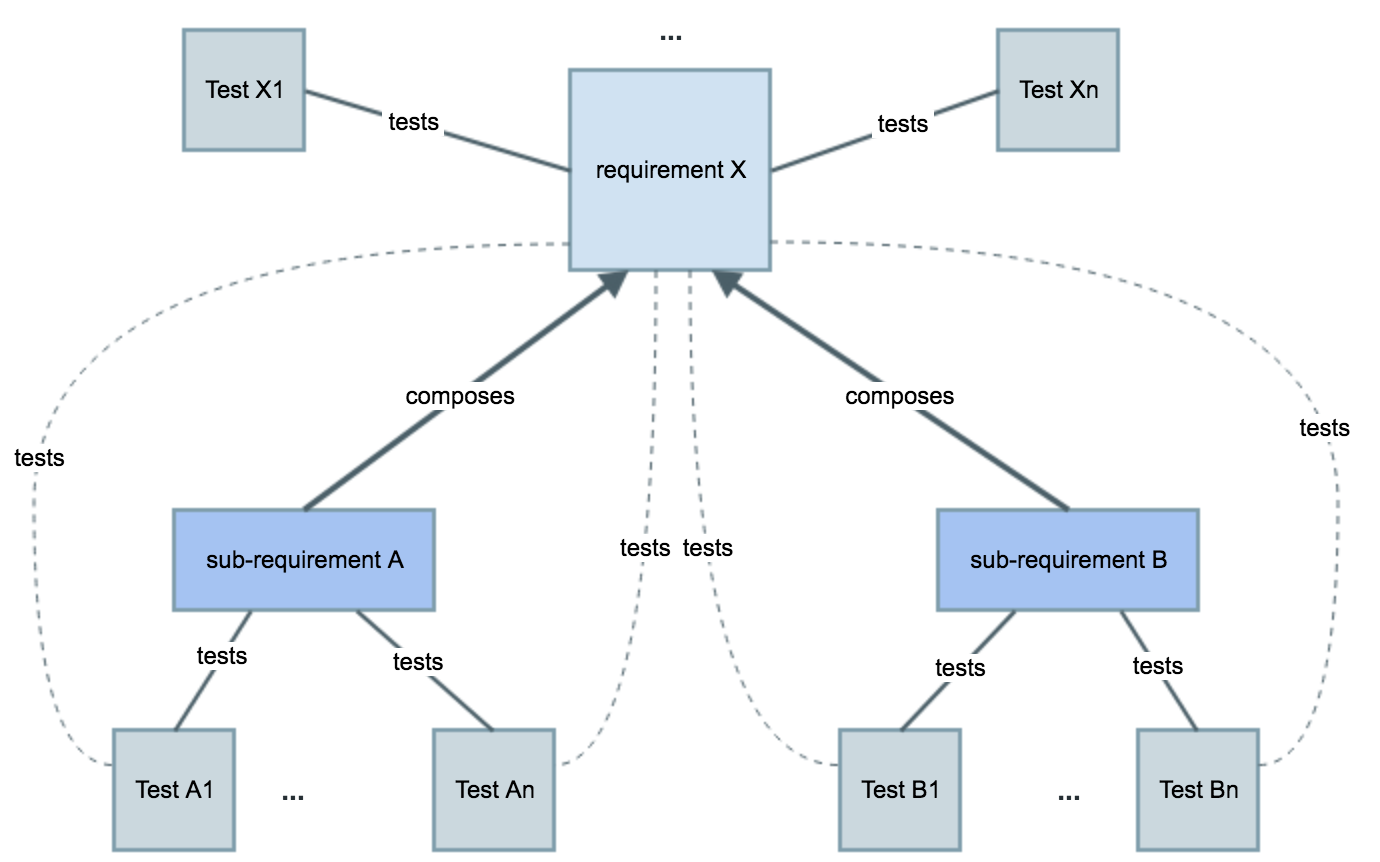

A requirement may be covered by one or more Tests. However, the status of a given requirement goes well beyond the basic covered/not covered information: it also takes into account your test results.

As soon as you start running your Tests, each individual test result may take one of many values, some of which may be very specific to your use case.

To make your analysis even more complex, you may be using sub-requirements and executing related Tests.

Requirements may be validated directly or indirectly, through the related sub-requirements and associated Test cases.

Thus, how do all these factors contribute to the calculation of a requirement status? How is the status of a Test evaluated?

Let’s start by detailing the possible values for Test, Test Step and requirement statuses. At the end, we’ll see how they impact the calculation of the coverage status of a requirement for a specific version or Test Plan.

When talking about statuses, we may be referring to the statuses of requirements, Tests, Test Runs or Test Steps.

The status of a requirement depends on the status of the "related" Tests.

The status of a Test depends on the status of the "related" Test Runs, which in turn depend on the recorded Test Step statuses for each one of them.

Whenever we're speaking about the status associated with a requirement or with a Test (and even with a Test Set), we may be talking about different things:

The current page details the first one, i.e., the status of the entities based on the executions made in some context. The "Requirement Status", "TestRunStatus" and "Test Set Status" custom fields are calculated fields, usable in requirement, Test and Test Set issues, respectively, that calculate the "status" (not the workflow status) of the requirement/Test/Test Set for a specific version, considering the executions (i.e., Test Runs) for that version. The version for which the status is calculated depends on the behavior defined in a global configuration (see configuration details here). These fields show the calculated status of the respective entity for a specific version; they are only meant to give a quick glimpse of each entity's status, for example right on the view issue screen, so their usage is limited. More information on these and other custom fields can be found here.

The status of a Test tells you about its current consolidated state (e.g., the latest recorded result, if any). Was it executed? Successfully? In which version?

Thus, whenever speaking about the "status of a Test" we need to give it some additional context (e.g. "In which version?") since it depends on "where" and how you want to analyze it.

The status of a Test indicates its "latest state" in some given context (e.g., for some version, some Test Plan and/or in some Test Environment).

Xray provides some built-in Test statuses (which can’t be modified or deleted). Each of these statuses maps to a requirement status, according to the following table.

| Test status | Final status? | Requirement status mapped to |
|---|---|---|
| PASS | yes | OK |
| FAIL | yes | NOK |
| TODO | no | NOTRUN |
| ABORTED | yes | NOTRUN |
| EXECUTING | no | NOTRUN |
| custom | custom | OK, NOK, NOTRUN or UNKNOWN |
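The table above can be captured as a small lookup structure. The following is a minimal Python sketch; the `StatusConfig` class, `BUILT_IN_TEST_STATUSES` and `requirement_status_for` are hypothetical names used only for illustration (they are not part of any Xray API), and custom statuses would simply be additional entries configured in Xray.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StatusConfig:
    """Hypothetical model of a Test status, as described in the table above."""
    name: str
    final: bool       # whether the status is "final"
    requirement: str  # requirement status it maps to: OK, NOK, NOTRUN or UNKNOWN


# Built-in Test statuses and their requirement mapping, copied from the table.
BUILT_IN_TEST_STATUSES = {
    s.name: s
    for s in (
        StatusConfig("PASS", final=True, requirement="OK"),
        StatusConfig("FAIL", final=True, requirement="NOK"),
        StatusConfig("TODO", final=False, requirement="NOTRUN"),
        StatusConfig("ABORTED", final=True, requirement="NOTRUN"),
        StatusConfig("EXECUTING", final=False, requirement="NOTRUN"),
    )
}


def requirement_status_for(test_status: str) -> str:
    """Return the requirement status that a given Test status maps to."""
    return BUILT_IN_TEST_STATUSES[test_status].requirement


print(requirement_status_for("ABORTED"))  # NOTRUN
```

Note that ABORTED is final but still maps to NOTRUN: being final and mapping to a successful coverage are independent attributes.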

The status (i.e. result) of a Test Run is an attribute of the Test Run (a “Test Run” is an instance of a Test and is not a Jira issue) and is the one taken into account to assess the status of the requirement.

Do not mix up the status of a given Test with the "TestRunStatus" custom field, which shows the status of a given Test for a specific version, depending on the configuration under Configuring Statuses Custom Fields. More info on this custom field here. The "TestRunStatus" custom field is a calculated field that belongs to the Test issue and takes into account several Test Runs; it does not affect the calculation of the status of requirements.

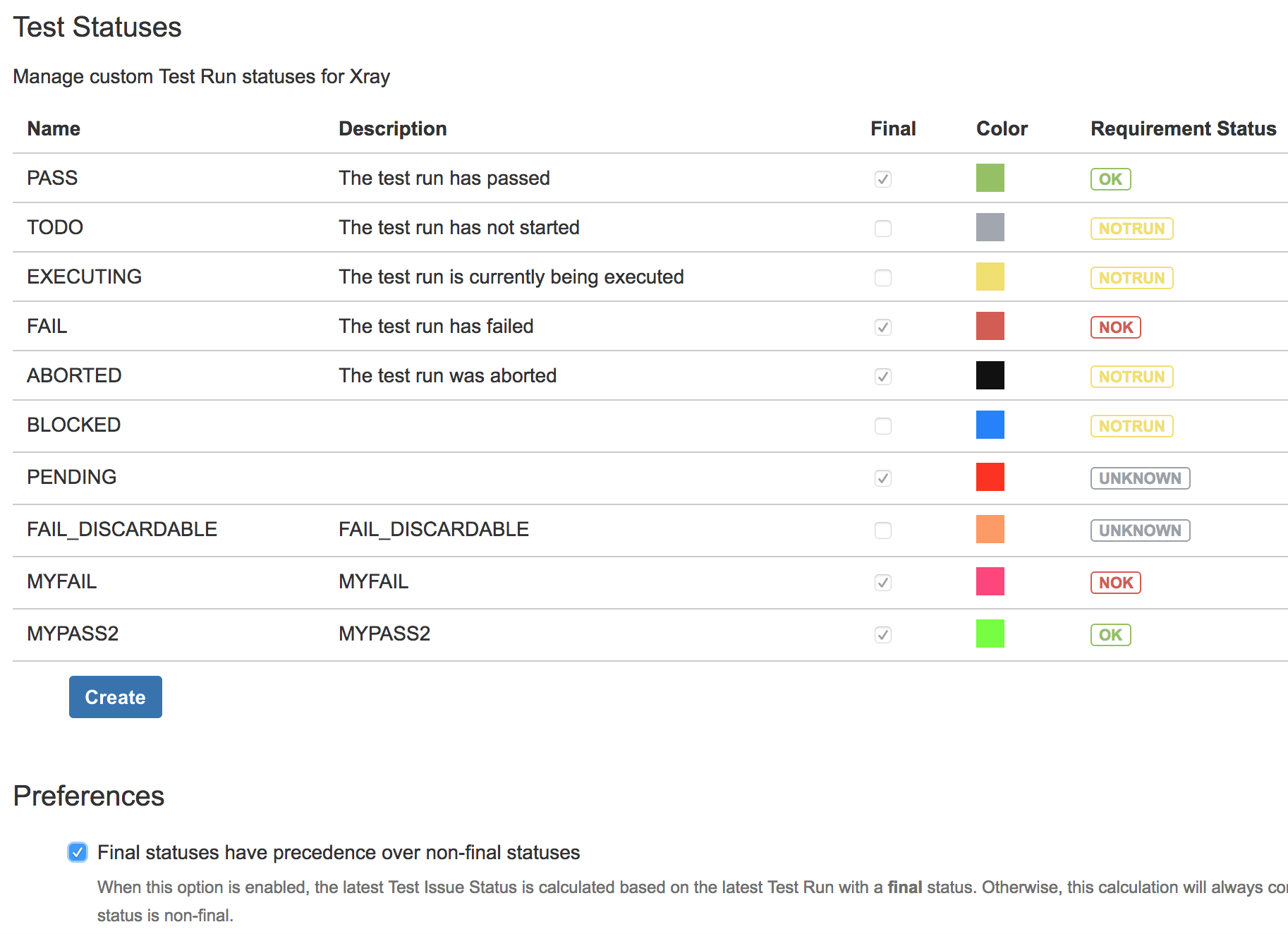

Creating new Test (Run) statuses may be done in the Manage Test Statuses configuration section of Xray.

Whenever creating/editing a Test status, we have to identify the Requirement status we want this Test status to map to.

One important attribute of a Test status is the “final” attribute. If the “Final Statuses have precedence over non-final” flag is enabled, Xray gives priority to final statuses when calculating the status of a Test. In other words, if a Test is currently in some final status (e.g., PASS, FAIL) and you schedule a new Test Run for it, this Test Run won't affect the calculation of the status of the Test.

This is useful when you want to take into account only the last final (i.e., complete) recorded result and discard Test Runs that are in an intermediate status (e.g., EXECUTING, TODO).
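A minimal Python sketch of the effect of this flag in a single context (one version or Test Plan, one Test Environment), assuming the Test Run statuses are already ordered by time, oldest first. The `status_of_test` function and the `FINAL` map are hypothetical illustrations based on the built-in statuses; Xray distinguishes between execution and creation time, which this sketch collapses into a single ordering.

```python
from typing import Dict, List

# "Final" flag of each built-in Test status (see the built-in statuses table).
FINAL: Dict[str, bool] = {
    "PASS": True, "FAIL": True, "ABORTED": True, "TODO": False, "EXECUTING": False,
}


def status_of_test(runs: List[str], final_precedence: bool) -> str:
    """Pick the status of a Test from its Test Run statuses in one context.

    `runs` holds the Test Run statuses ordered by time, oldest first.
    """
    if not runs:
        raise ValueError("no Test Runs in this context")
    if final_precedence:
        final_runs = [status for status in runs if FINAL[status]]
        if final_runs:
            # Later runs that are still in an intermediate status are ignored.
            return final_runs[-1]
    # Otherwise (or if no final run exists) the latest Test Run wins.
    return runs[-1]


runs = ["FAIL", "PASS", "TODO"]
print(status_of_test(runs, final_precedence=True))   # PASS
print(status_of_test(runs, final_precedence=False))  # TODO
```

With the flag enabled, the newly scheduled TODO run does not hide the last complete result (PASS); with it disabled, the most recent run always determines the status.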

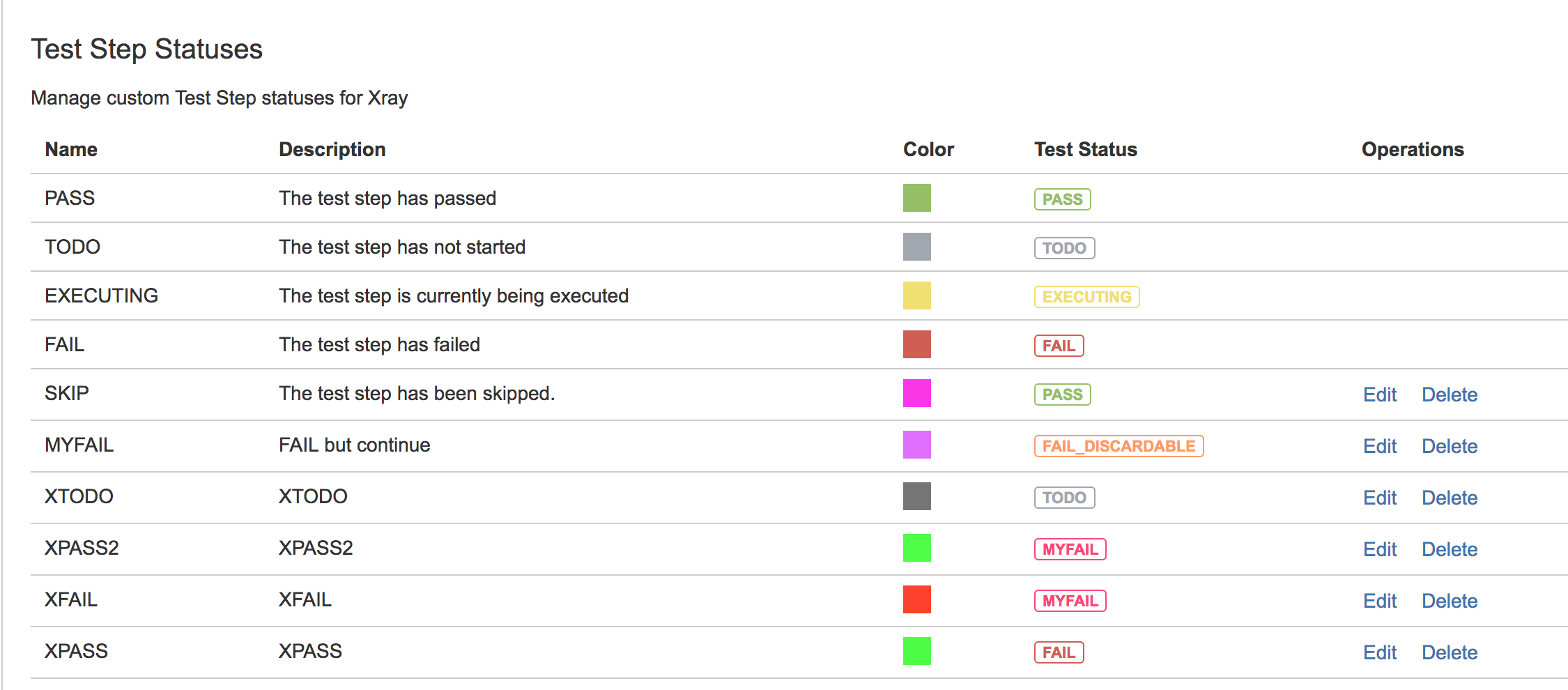

The status of a Test Step indicates the result obtained for that step for some Test Run.

Statuses reported at Test Step level will contribute to the overall calculation of the status of the related Test Run.

Xray provides some built-in Test Step statuses (which can’t be modified or deleted). Each of them maps to a Test status, as shown in the following table.

| Test Step status | Test status |
|---|---|
| PASS | PASS |
| TODO | TODO |
| EXECUTING | EXECUTING |
| FAIL | FAIL |
| custom | custom |

Creating new Test Step statuses may be done in the Manage Test Step Statuses configuration section of Xray.

Whenever creating/editing a Test Step status, we have to identify the Test status we want this step status to map to.

Note that native Test Step statuses can’t be modified or deleted.

The status of a requirement tells you about its current state from a quality perspective. Is it covered by test cases? If so, has it been validated successfully? In which version?

Thus, whenever speaking about the "status of a requirement" we need to give it some additional context (e.g. "In which version?") since it depends on "where" and how you want to analyze it.

The status of a requirement indicates its coverage information along with its "state", depending on the results recorded for the Tests that validate it. The status of a requirement is evaluated in some given context (e.g., for some version, some Test Plan and/or in some Test Environment).

In Xray, for a given requirement, considering the default settings, its coverage status may be:

It’s not possible to create custom requirement statuses.

In order to calculate the coverage status of a requirement for a specific system version, we “just” need to take into account the status of the related Tests for that same version. We’ll come back to this later on.

Do not mix up the status of a given requirement with the "Requirement Status" custom field, which shows the status of a given requirement for a specific version, depending on the configuration under Custom Fields. More info on this custom field here.

The status of a given Test Run is an attribute that is often calculated automatically based on the respective recorded step statuses. You can also enforce a specific status for a Test Run, which in turn may implicitly enforce specific step statuses (e.g., setting a Test Run as "FAIL" can set all steps as "FAIL").

This calculation is made by following these rules:

The order of the steps is irrelevant for the purpose of the overall Test Run status value.

Consequences:

The following table provides some examples given the Test Step Statuses configuration shown above.

| Example # | Statuses of the steps/contexts (the order of the steps/contexts is irrelevant) | Calculated value for the status of the Test Run | Why? |
|---|---|---|---|
| 1 | | PASS | All steps are PASS, thus the joint value is PASS. |
| 2 | | EXECUTING | At least one step status (i.e., TODO) is mapped to a non-final Test status. |
| 3 | | FAIL | One of the step statuses (i.e., FAIL) has a higher ranking than the other ones. |
| 4 | | FAIL | Since one of the steps is FAIL, the run will be marked as FAIL. |
| 5 | | FAIL | Since one of the steps is FAIL, the run will be marked as FAIL. |
| 6 | | FAIL | All step statuses map to Test statuses that are in turn associated with "NOK". Since one of them is FAIL, the run will be marked as FAIL. |
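The following Python sketch infers a plausible set of aggregation rules from the examples above, using the built-in step mapping: if all steps map to the same Test status, the run takes it; otherwise FAIL wins; otherwise any non-final status makes the run EXECUTING; otherwise the highest-ranked status wins. The rule order, the special handling of FAIL and the `RANK` values are assumptions, not Xray's documented algorithm, and `run_status` is a hypothetical name.

```python
from typing import Dict, List

# Test Step status -> Test status, as in the built-in mapping table.
STEP_TO_TEST: Dict[str, str] = {
    "PASS": "PASS", "TODO": "TODO", "EXECUTING": "EXECUTING", "FAIL": "FAIL",
}
# "Final" flags of the built-in Test statuses.
FINAL: Dict[str, bool] = {"PASS": True, "FAIL": True, "TODO": False, "EXECUTING": False}
# Hypothetical ranking (a higher number wins); only relevant once custom
# final statuses are added to the maps above.
RANK: Dict[str, int] = {"PASS": 0, "TODO": 1, "EXECUTING": 2, "FAIL": 3}


def run_status(step_statuses: List[str]) -> str:
    """Derive a Test Run status from its step statuses (order is irrelevant)."""
    if not step_statuses:
        raise ValueError("a Test Run needs at least one step status")
    mapped = {STEP_TO_TEST[s] for s in step_statuses}
    if len(mapped) == 1:
        # All steps agree: the run takes that (mapped) Test status.
        return mapped.pop()
    if "FAIL" in mapped:
        # Assumed: a failed step always fails the run.
        return "FAIL"
    if any(not FINAL[t] for t in mapped):
        # Some step is still in an intermediate state.
        return "EXECUTING"
    # Otherwise the highest-ranked mapped Test status wins.
    return max(mapped, key=RANK.__getitem__)


print(run_status(["PASS", "PASS"]))          # PASS
print(run_status(["PASS", "TODO"]))          # EXECUTING
print(run_status(["TODO", "FAIL", "PASS"]))  # FAIL
```

Because the steps are collected into a set, the result does not depend on their order, matching the statement that step order is irrelevant.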

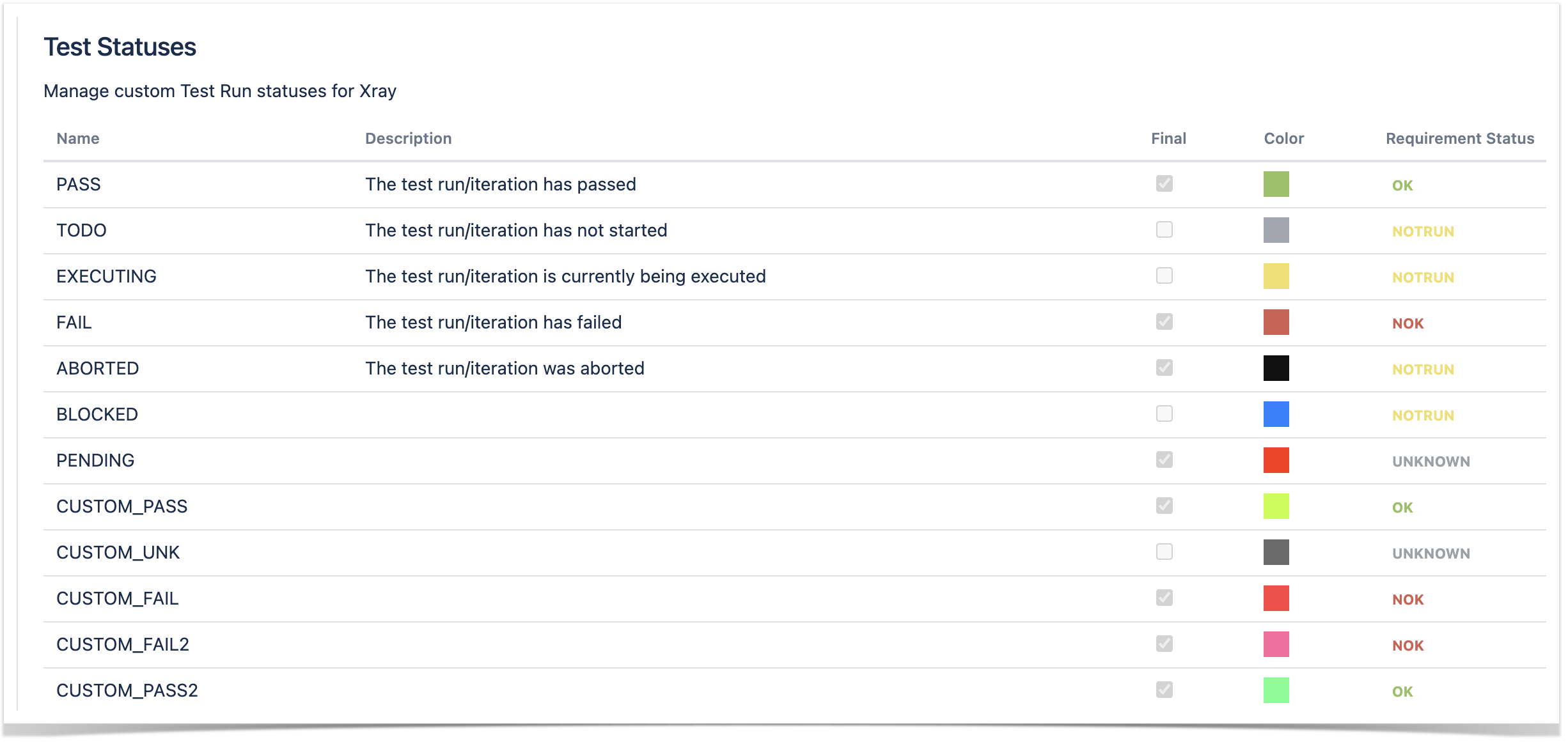

Let's consider the following configuration.

| Example # | Statuses of the steps/contexts (the order of the steps/contexts is irrelevant) | Calculated value for the status of the Test Run | Why? |
|---|---|---|---|
| 1 | | CUSTOM_PASS2 | Both steps contribute in a "positive way" (i.e., they were successful, as ultimately they are linked to a successful coverage impact). The Test statuses mapped to these step statuses are both associated with "OK" coverage; as CUSTOM_PASS2 has a higher ranking than CUSTOM_PASS, the run will be marked as "CUSTOM_PASS2". |
| 2 | | CUSTOM_PASS2 | Similar to the previous example; any other status wins over the "PASS" status. |
| 3 | | CUSTOM_FAIL2 | Both steps contribute in a "negative way" (i.e., they were not successful, as ultimately they are linked to an unsuccessful coverage impact). The Test statuses mapped to these step statuses are both associated with "NOK" coverage; as CUSTOM_FAIL2 has a higher ranking than CUSTOM_FAIL, the run will be marked as "CUSTOM_FAIL2". |

It is possible to calculate the status of a Test either by Version or Test Plan, in a specific Test Environment or globally, taking into account the results obtained for all Test Environments.

Analysis:

What affects the calculation:

The following table provides some examples given the Test Statuses configuration shown above in the Managing Test Statuses section.

| Example # | Statuses of the Test Runs (ordered by time of execution/creation, ascending) | Final statuses have precedence over non-final statuses | Calculated value for the status of the Test | Why? |
|---|---|---|---|---|
| 1a | | true | PASS | The latest executed Test Run with a final status (2) was PASS. |
| 1b | | false | TODO | The latest created Test Run (3) was TODO. |
| 2a | | true | MYPASS2 | The latest executed final Test Runs in each environment were PASS, MYPASS2, and PASS, respectively. Since MYPASS2 (2) has a higher ranking, the calculated status will be MYPASS2. |
| 2b | | false | TODO | The latest created Test Runs in each environment were PASS, TODO, and PASS, respectively. Since PASS has the lowest ranking, TODO (3) will "win" and the calculated status will be TODO. |
| 3 | | true | TODO | The latest created Test Runs in each environment were PASS, TODO, and PASS, respectively. Although Test Environment "env2" has only a non-final Test Run, since there is no other Run for that environment, it is used as the calculated status for that environment. Since PASS has the lowest ranking, TODO (2) will "win" and the calculated status will be TODO. |
| 4 | | true (or false) | FAIL | The latest executed (or created) final Test Runs in each environment were PASS, FAIL, and PASS, respectively. Since the calculated status for one of the environments is FAIL, the calculated status will be FAIL. |
| 5 | | true | MYPASS2 | The latest executed final Test Runs in each environment were PASS, MYPASS2, and MYFAIL, respectively. MYPASS2 has a higher ranking than the other ones, thus the overall calculated value will be MYPASS2. |
| 6 | | false | MYFAIL | The latest created Test Runs in each environment were PASS, TODO, and MYFAIL, respectively. MYFAIL has a higher ranking than the other ones, thus the overall calculated value will be MYFAIL. |
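The examples above suggest a two-stage calculation: first a status per Test Environment (the latest final run if the flag is enabled and one exists, otherwise the latest run), then the highest-ranked of those per-environment statuses. The Python sketch below illustrates that reading. MYPASS2 and MYFAIL stand for the custom statuses used in the examples, and the `RANK` values are hypothetical, chosen only to be consistent with the table; in Xray the ranking comes from the Manage Test Statuses configuration.

```python
from typing import Dict, List

# Hypothetical configuration: "final" flag and ranking (higher wins) per Test status.
FINAL: Dict[str, bool] = {
    "PASS": True, "TODO": False, "EXECUTING": False,
    "FAIL": True, "MYFAIL": True, "MYPASS2": True,
}
RANK: Dict[str, int] = {
    "PASS": 0, "TODO": 1, "EXECUTING": 2, "FAIL": 3, "MYFAIL": 4, "MYPASS2": 5,
}


def env_status(runs: List[str], final_precedence: bool) -> str:
    """Status of a Test in one Test Environment (runs ordered oldest first)."""
    if final_precedence:
        final_runs = [s for s in runs if FINAL[s]]
        if final_runs:
            return final_runs[-1]
    # No final run (or flag disabled): the latest run is used as-is.
    return runs[-1]


def overall_status(runs_by_env: Dict[str, List[str]], final_precedence: bool) -> str:
    """Combine per-environment statuses: the highest-ranked one wins."""
    per_env = [env_status(runs, final_precedence) for runs in runs_by_env.values()]
    return max(per_env, key=RANK.__getitem__)


runs = {"env1": ["PASS"], "env2": ["TODO"], "env3": ["PASS"]}
print(overall_status(runs, final_precedence=True))  # TODO
```

To calculate the status for a specific Test Environment only, `env_status` alone is enough; `overall_status` corresponds to the global calculation across all environments.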

It is possible to calculate the status of a Requirement either by Version or Test Plan, in a specific Test Environment or globally, taking into account the results obtained for all Test Environments.

Analysis:

The algorithm is similar to the one used to calculate the overall status of a Test, taking into account the results obtained for different Test Environments.

In other words, the status of each linked and "relevant" Test case is calculated, and then a joint calculation is made for a single virtual Test case. The requirement status corresponds to the mapped value of the status calculated for this virtual Test.
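A minimal Python sketch of the virtual Test idea, assuming the status of each relevant Test has already been calculated for the chosen context. The joint status is taken as the highest-ranked one and then mapped to a requirement status; the `RANK` values and the `requirement_status` name are hypothetical, while the mapping follows the built-in Test statuses table. Returning UNCOVERED when no Test is relevant is also an assumption of this sketch.

```python
from typing import Dict, List

# Hypothetical ranking (higher wins) and the built-in requirement mapping.
RANK: Dict[str, int] = {"PASS": 0, "TODO": 1, "EXECUTING": 2, "ABORTED": 3, "FAIL": 4}
MAPS_TO: Dict[str, str] = {
    "PASS": "OK", "TODO": "NOTRUN", "EXECUTING": "NOTRUN",
    "ABORTED": "NOTRUN", "FAIL": "NOK",
}


def requirement_status(test_statuses: List[str]) -> str:
    """Requirement status from the (already calculated) statuses of its relevant Tests."""
    if not test_statuses:
        return "UNCOVERED"
    # Joint calculation for a single "virtual" Test: the highest-ranked status wins.
    virtual_test = max(test_statuses, key=RANK.__getitem__)
    return MAPS_TO[virtual_test]


print(requirement_status(["PASS", "PASS", "TODO"]))  # NOTRUN
```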

The Tests that will be considered as covering the requirement are not just the ones directly linked to the requirement. In fact, they may either be direct ones or ones linked to sub-requirements. This list can be further restricted if Test Sets are being used for defining the scope of coverage (i.e. the list of Tests relevant for the coverage calculation for some version).

Algorithm:

What affects the calculation:

When a requirement has sub-requirements, the calculated status of the parent requirement depends not only on its own status, calculated per se, but also on the status of each individual sub-requirement.

The calculation follows the rules described in the following table.

| REQ \ SUB-REQ | OK | NOK | NOT RUN | UNKNOWN | UNCOVERED |
|---|---|---|---|---|---|
| OK | OK | NOK | NOT RUN | UNKNOWN | OK |
| NOK | NOK | NOK | NOK | NOK | NOK |
| NOT RUN | NOT RUN | NOK | NOT RUN | UNKNOWN | NOT RUN |
| UNKNOWN | UNKNOWN | NOK | UNKNOWN | UNKNOWN | UNKNOWN |
| UNCOVERED | OK | NOK | NOT RUN | UNKNOWN | UNCOVERED |
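The table above can be encoded directly as a lookup and folded over the sub-requirements. As the sanity check in this Python sketch shows, every cell equals the "most severe" of the two statuses under the ordering UNCOVERED < OK < NOT RUN < UNKNOWN < NOK; that ordering (`SEVERITY`) is our own reading of the table rather than an Xray setting, and `combine` is a hypothetical helper name.

```python
from typing import List

STATUSES = ["OK", "NOK", "NOT RUN", "UNKNOWN", "UNCOVERED"]

# Rows of the table above (parent requirement status -> result per sub-requirement status).
_ROWS = {
    "OK":        ["OK", "NOK", "NOT RUN", "UNKNOWN", "OK"],
    "NOK":       ["NOK", "NOK", "NOK", "NOK", "NOK"],
    "NOT RUN":   ["NOT RUN", "NOK", "NOT RUN", "UNKNOWN", "NOT RUN"],
    "UNKNOWN":   ["UNKNOWN", "NOK", "UNKNOWN", "UNKNOWN", "UNKNOWN"],
    "UNCOVERED": ["OK", "NOK", "NOT RUN", "UNKNOWN", "UNCOVERED"],
}
COMBINE = {
    (req, sub): result
    for req, row in _ROWS.items()
    for sub, result in zip(STATUSES, row)
}

# Observed ordering under which each cell is simply the most severe status.
SEVERITY = {"UNCOVERED": 0, "OK": 1, "NOT RUN": 2, "UNKNOWN": 3, "NOK": 4}


def combine(req_status: str, sub_statuses: List[str]) -> str:
    """Fold the parent's own status with each sub-requirement status."""
    result = req_status
    for sub in sub_statuses:
        result = COMBINE[(result, sub)]
    return result


# Sanity check: the table lookup agrees with the severity-based shortcut.
assert all(
    COMBINE[(a, b)] == max(a, b, key=SEVERITY.__getitem__)
    for a in STATUSES for b in STATUSES
)

print(combine("UNCOVERED", ["OK", "NOT RUN"]))  # NOT RUN
```

Since UNCOVERED is the neutral element of this combination, uncovered sub-requirements never change the status obtained from Tests linked directly to the parent.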

From another perspective, you would obtain the same value for the calculation of the status of the parent requirement if you consider that it is being covered by all the explicitly linked Tests and also the ones linked to sub-requirements.

Consequences:

Even if you have sub-requirements, when Tests are linked directly to the parent requirement, Xray assumes that you are validating the requirement directly. Thus, sub-requirements that are not covered by any Test do not affect the calculated status.

The following table provides some examples given the Test Statuses configuration shown above in the Managing Test Statuses section.

| Example # | Statuses of the related Tests (sub-requirements, whenever present, use the notation subReqX) | Calculated value for the status of the requirement | Why? |
|---|---|---|---|
| 1 | | OK | All Tests passed (it is similar to having just one virtual Test that would be considered PASS and thus mapped to the OK status of the requirement). |
| 2 | | NOT RUN | One of the Tests (3) is TODO, which has a higher ranking than PASS. |
| 3 | | NOK | One of the Tests (3) is FAIL, which has a higher ranking than PASS. |
| 4 | | NOK | One of the Tests (3b) is FAIL, thus subReq2 will be considered NOK. Since it is NOK, the parent requirement status will be NOK. |
| 5 | | NOT RUN | One of the sub-requirements (subReq1) is NOT RUN, thus the calculated status, when combined with the parent requirement status, will be NOT RUN. |
| 6 | | OK | Since all sub-requirements are uncovered and the parent requirement is covered directly by one Test (1), which is currently PASS, the calculated "OK" status is based on that Test. |